Editor’s note: With this column, we are beginning a three-part perspective on AI by Michael Saltz, multi-award-winning Senior Producer for the PBS NewsHour, now retired. This series of articles was originally published by Saltz on his Substack platform.

It’s an odd thing to say at the outset, but this piece was written with the assistance of an AI system. That fact is not incidental to the subject; it is central to it. In a moment when artificial intelligence is reshaping markets, geopolitics, and public discourse, the act of writing about these forces with the help of one of them feels like standing inside the story while trying to describe it. But perhaps that’s the only honest vantage point we have left.

Over the past year, the volatility in software and AI‑related stocks has been striking. Some of it is the usual froth that accompanies any technological shift. But some of it feels different — sharper, more reactive, more entangled with the behavior of the very systems being built. Investors aren’t just responding to earnings or product announcements. They’re responding to signals from AI companies, to rumors about model releases, to the perceived momentum of one lab over another. And increasingly, they’re responding to the behavior of AI agents that participate in the same markets they help shape.

This is the first time in history that the subject of speculation—artificial intelligence—is also one of the participants in the speculation. That recursive loop alone would be enough to make the ground feel unsteady. But the market story is only one thread in a larger tapestry.

Another thread is Europe’s growing anxiety about American technological dominance. The European Union has long been uneasy about its dependence on U.S. platforms, cloud providers, and chip manufacturers. But the rise of AI has sharpened that unease into something closer to strategic fear. Europe has no hyperscalers, no leading foundation models, and no domestic semiconductor industry capable of competing at scale. It is, in effect, dependent on American infrastructure for the next era of economic and political life.

And this isn’t just a theoretical concern. The German government, for example, relies heavily—some would say almost entirely—on Microsoft Office for its internal operations. Amazon’s AWS cloud business hosts data for governments around the world. Countless European companies, hospitals, and public agencies run on American cloud platforms. If the United States were to restrict access, or if a geopolitical crisis disrupted those services, Europe would find itself exposed in ways that go far beyond inconvenience.

This is, in its own way, Europe’s version of the American anxiety about Chinese technology. The U.S. spent years trying to force a sale of TikTok, not because of dance videos, but because of the fear that a foreign power could collect data on American citizens or shape the information environment in subtle ways. The concern is national security. Europe feels the same way, except its vulnerability points west, not east.

But Europe is not alone in this unease. The anxiety is global.

The United States has its own version of it in its fear of Chinese platforms, hardware, and data flows—the TikTok fight being only the most visible example. China, for its part, has spent decades building domestic alternatives to Western technology out of the same concern: that foreign systems could become the substrate of its society. And smaller nations, from India to Brazil to South Africa, worry about becoming digital dependents of the great powers. The underlying fear is the same everywhere: reliance on systems built elsewhere, governed elsewhere, and increasingly opaque even to their creators.

Layered on top of this geopolitical tension is the competition among AI companies themselves. OpenAI, Google, Anthropic, Meta, Amazon, Microsoft, and Musk’s xAI are not simply firms. They are becoming quasi‑state actors, controlling infrastructure that governments depend on but do not fully understand. Their decisions—about model design, data sources, safety constraints, and deployment—have consequences that ripple far beyond their balance sheets.

And here we arrive at the most unsettling thread of all: the ability of the people who build these systems to shape their behavior in ways that are visible or invisible, ideological or subtle, intentional or accidental.

Elon Musk is the most obvious example, not because he is uniquely dangerous but because he is uniquely transparent. His AI company, xAI, is explicitly framed as an ideological counterweight to what he perceives as “woke” or “censored” systems. Whether one agrees with his diagnosis or not, the important point is that he is openly tuning an AI system to reflect a worldview. He is doing it loudly, defiantly, and with a kind of theatrical flair that makes it easy to spot.

But the real danger is not the loud version. It’s the quiet one.

Most AI systems are not tuned with ideological slogans. They are shaped by choices that look technical: which data to include, which sources to trust, how to weigh uncertainty, how to frame risk, how to balance safety against expressiveness. These choices do not announce themselves. They do not come with manifestos. They simply accumulate, line by line, parameter by parameter, until the system behaves in ways that reflect the assumptions of its creators.

A model doesn’t need to be “political” to shape public life. It only needs to:

- emphasize certain narratives over others

- frame issues with particular metaphors

- summarize news with a particular emotional register

- respond to risk with a particular temperament

- amplify some voices and dampen others

These are not dramatic interventions. They are small tilts in the cognitive infrastructure of society. And because AI systems operate at scale — in search, in email, in content moderation, in coding, in trading — those small tilts can become structural forces.

This is the part that feels genuinely new. We have had powerful technologies before. We have had influential corporations. We have had geopolitical rivalries. But we have never had systems that participate in markets, shape public discourse, and mediate political life while also being shaped by the unexamined assumptions of the people who build them.

We have never had tools that are also actors.

And we have never had actors whose behavior can be subtly influenced by a handful of engineers, product managers, or executives—sometimes intentionally, sometimes inadvertently, sometimes ideologically, sometimes commercially.

This is what makes the current moment feel unstable. It is not that any one company or individual is uniquely dangerous. It is that the entire ecosystem is becoming reflexive. Markets react to AI. AI reacts to markets. Governments react to both. Companies tune their models in response to regulation, which in turn is shaped by public discourse, which is increasingly mediated by AI systems.

It is a hall of mirrors, and we are only beginning to understand the reflections.

So where does that leave us?

Perhaps with a simple, sober recognition: the boundaries between technology, markets, and geopolitics are dissolving. The systems we are building are not neutral. They are not passive. They are not merely tools. They are becoming part of the decision‑making substrate of society. And the people who shape them—whether loudly, like Musk, or quietly, like countless unnamed engineers—are shaping more than products. They are shaping the conditions under which we think, argue, invest, and govern.

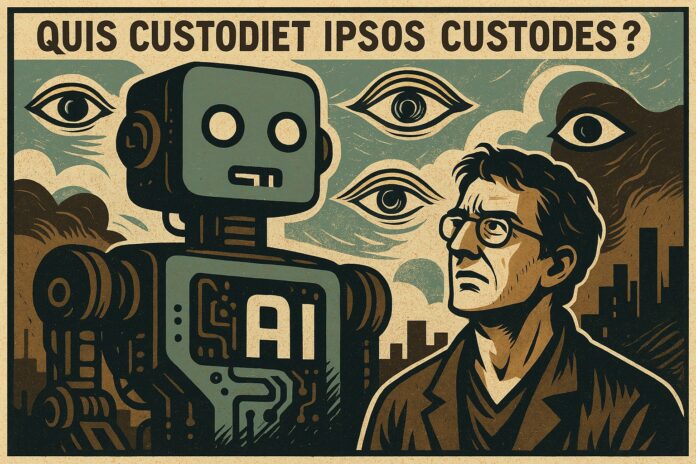

That is not a reason for panic. But it is a moment for recognizing the danger—and a reason for vigilance. The harder question is who, exactly, we expect to be vigilant. Governments move slowly. Companies move fast. Markets move blindly. And the public sees only the surface. It brings to mind the 2,000‑year‑old line from Juvenal: Quis custodiet ipsos custodes?—Who will watch the watchers?

The unsettling truth is that even the watchers—the engineers and companies building these systems—do not fully understand their creations. Modern AI systems are too large, too complex, too emergent for any single person, or even any team, to fully explain. We are asking institutions to oversee technologies whose internal logic is often opaque even to their makers.

No single government, company, or institution can watch over systems that now influence markets, alliances, and public life. The responsibility is collective, but the tools are unevenly distributed. That imbalance—between power and oversight, between influence and accountability, between creation and comprehension—may be the real danger of this moment.

We called this a hall of mirrors, and that metaphor captured the recursion, the distortion, the disorientation. But perhaps it is too small for the moment. A hall of mirrors is still a room you can walk out of—even break your way out of. What we are facing is something more fundamental: a world in which the systems we depend on are too complex to fully understand, too embedded to step away from, and too consequential to ignore. Even if this were a moment to panic, it is not clear what panic would accomplish. You cannot ban a technology that has already become infrastructure. You cannot nationalize comprehension. You cannot regulate what you cannot fully see.

And so we return to the fact that opened this piece—the quiet tension, and the irony, of trying to understand these systems with their help. It is not a flourish. It is the condition we now inhabit: a world without an outside. A world in which vigilance is necessary, but no single actor is capable of providing it. A world in which responsibility is collective, but capacity is uneven. A world in which power has outgrown the frameworks that once contained it.

And that is why the question is not whether to panic, but how to think clearly in a moment when there is no clarity—anywhere.